This lesson covers load balancing in system design interviews. Load balancing is a very common technique in system design interviews and is part of the step where we talk about scaling our application to millions or billions of users. It helps us scale our system by distributing the load across all servers, making sure that no single server is overloaded.

1. Load Balancing In System Design Interview

A load balancer is an important component of a distributed system. As the name suggests, a load balancer distributes incoming traffic across a set of backend servers, and it takes multiple factors into account when choosing a server to handle a request. Load balancing is a common topic in system design interviews, so we need to make sure we understand what it is and how it works.

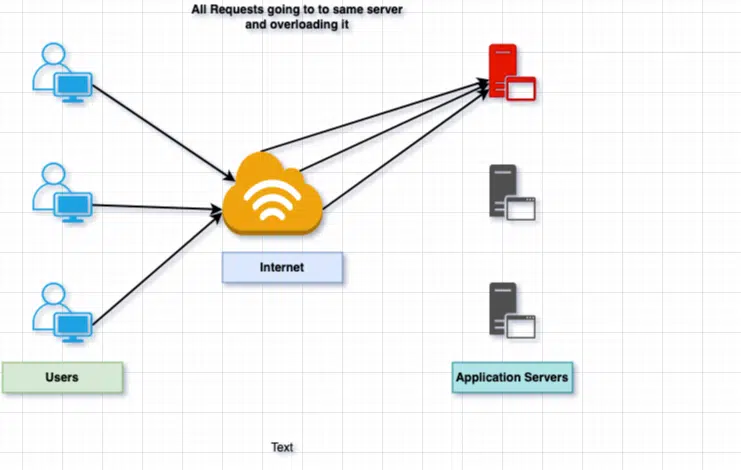

Most modern applications are distributed, high-traffic websites that might serve thousands of concurrent requests, and we need to ensure that every request is handled efficiently and with low latency. Let’s see how things work before a load balancer enters the picture.

Let’s understand a few important things about load balancers and why we need them.

- Each application server has a maximum capacity (CPU, memory, and other resources). This capacity determines how much work the server can handle at a given time. If the server reaches its maximum capacity, it starts failing to respond, and if no action is taken, it can become completely unresponsive and go offline.

We can handle the above situation in the following ways:

- Add more resources to the server (e.g., memory, CPU cores) to increase its load capacity. This is known as vertical scaling. Vertical scaling has known limits, since we can’t keep adding resources to a single server indefinitely.

- Another option is to add more servers in parallel to offload some of the traffic from the existing server. This technique is known as horizontal scaling.

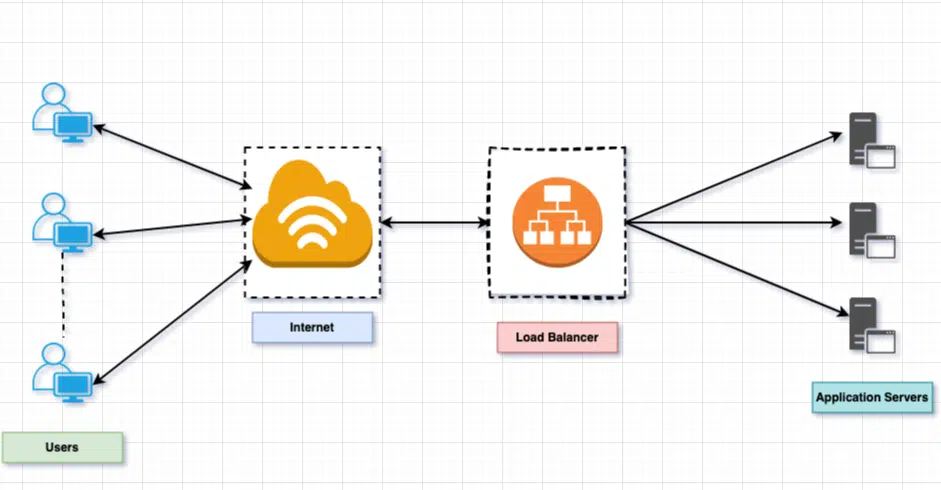

Once we add a new server to the stack, how do we route traffic to it, and how do we distribute traffic between these two servers? This is where load balancing comes into the picture. A load balancer sits between the clients and the servers, accepting incoming network and application traffic and distributing it across multiple backend servers using various algorithms.

The load balancer distributes the traffic between the application servers, making sure that no single server is overloaded and that traffic is spread effectively among all of them.

2. Load Balancer Benefits

Load balancing has multiple benefits, which makes it an effective tool in your system design interviews, especially once the discussion turns to scalability. Let’s look at some of the main benefits of using a load balancer in your application.

- It provides high availability for our application: by sending traffic only to healthy servers, it makes sure end customers do not face any downtime.

- It distributes incoming requests effectively and efficiently across the application servers.

- It makes horizontal scaling easy. With the load balancer sitting between the clients and the servers, it’s easy to add or remove application servers.

- Users experience faster, uninterrupted service. Users won’t have to wait for a single struggling server to finish its previous task.

- Some advanced load balancers also offer predictive analytics that detect traffic bottlenecks before they happen, allowing us to act in advance.

- It also helps to minimize the response time by making sure a request always goes to a healthy application server.

3. Load Balancer Algorithms

Now that we know what a load balancer does for us, the next question is: how does a load balancer decide which server to send a request to? Load balancers use load balancing algorithms to pick the right server for each incoming request. We can divide these algorithms into two categories:

- Static Load Balancing Algorithms.

- Dynamic Load Balancing Algorithms.

For every incoming request, the load balancer does the following:

- Identify the servers that are actually responding appropriately (the healthy servers).

- Use a pre-configured algorithm to select one server from that set of healthy servers.
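The two steps above can be sketched in Python as a health filter followed by a pluggable selection function. This is a minimal sketch under stated assumptions, not how any specific load balancer is implemented: the `Server` class, its `healthy` flag (which a real system would update from periodic health checks), and the `choose_server` helper are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class Server:
    name: str
    healthy: bool = True  # hypothetical flag; a real system sets it from health checks


# A selection algorithm receives the healthy servers and returns one of them.
Algorithm = Callable[[Sequence[Server]], Server]


def choose_server(servers: Sequence[Server], algorithm: Algorithm) -> Server:
    # Step 1: keep only the servers that are responding appropriately.
    healthy = [s for s in servers if s.healthy]
    if not healthy:
        raise RuntimeError("no healthy servers available")
    # Step 2: let the pre-configured algorithm pick one of them.
    return algorithm(healthy)


# Usage with a trivial "pick the first healthy server" algorithm.
pool = [Server("app-1", healthy=False), Server("app-2"), Server("app-3")]
print(choose_server(pool, lambda candidates: candidates[0]).name)  # app-2
```

Keeping the algorithm as a parameter mirrors the split described above: health checking is shared, and only the selection strategy changes between the algorithms that follow.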

3.1 Static Load Balancer Algorithms

As the name suggests, static load balancing algorithms distribute traffic without taking the current state of the servers (e.g., current load, performance) into account. These algorithms are very easy to implement, but they lack some of the scalability benefits offered by dynamic load balancing. Let’s look at these algorithms:

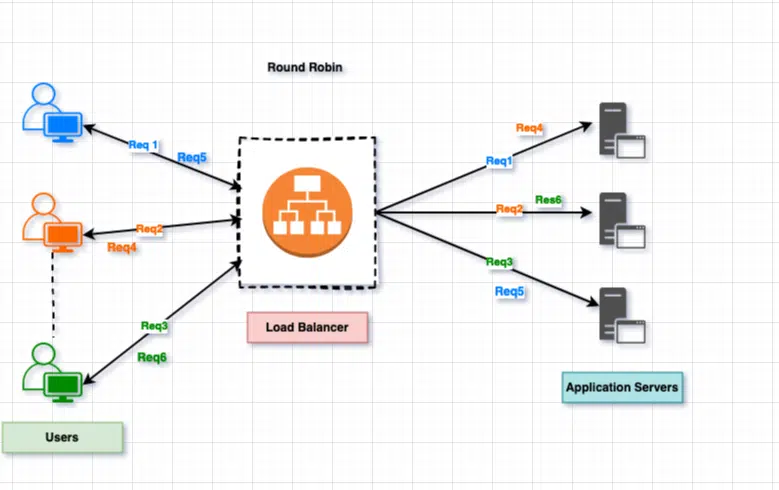

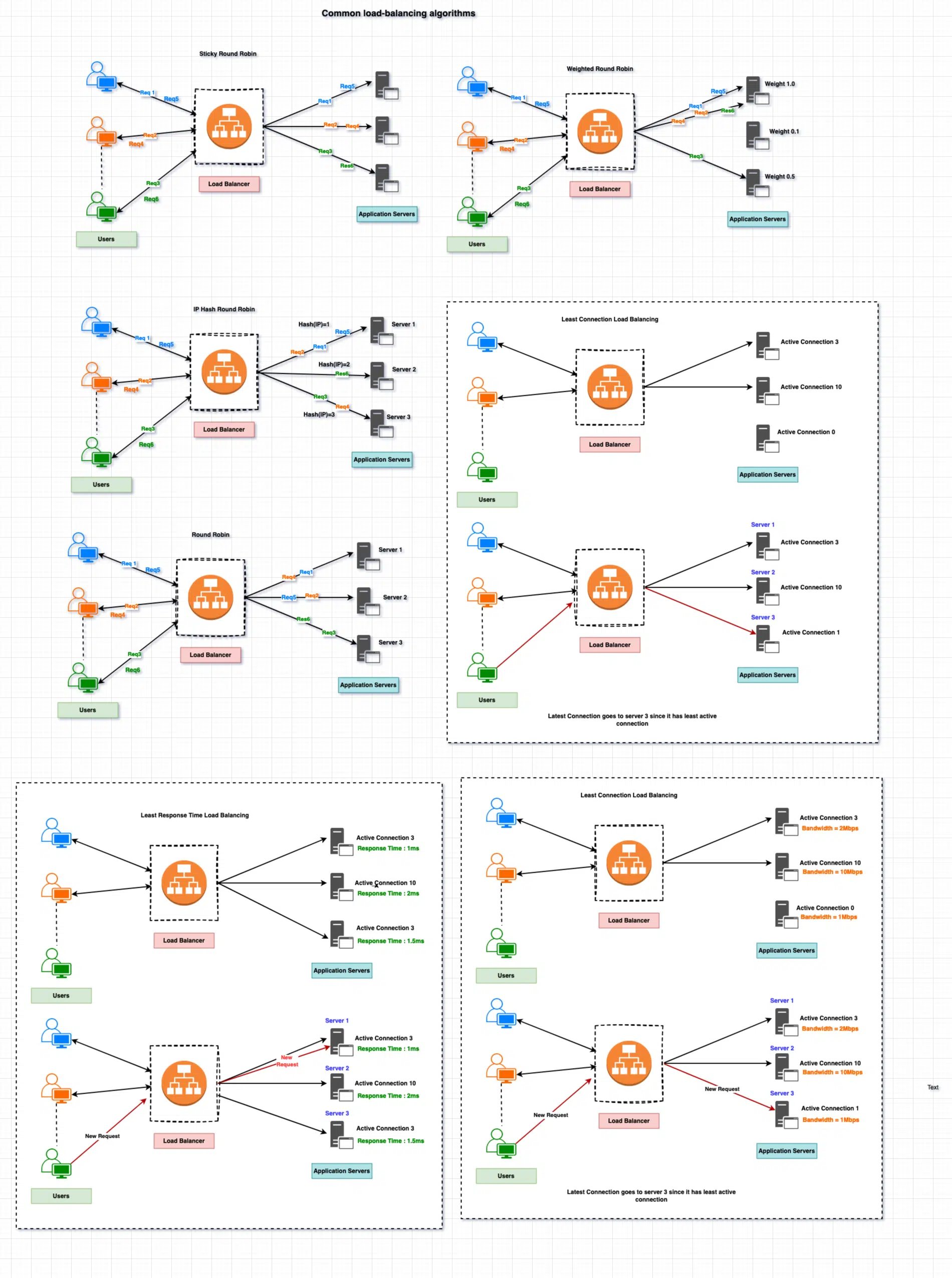

3.1.1 Round Robin

This is the simplest and most popular method, and the easiest to implement. It cycles through a list of servers and sends each new request to the next server. When the load balancer reaches the end of the server list, it starts again from the beginning. In short, each server gets its turn to receive and process requests, regardless of its status or current load.

As mentioned earlier, it’s the easiest method to implement; however, it does not take server load or response time into account.
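A minimal Python sketch of round robin, assuming a fixed server list and requests handled one at a time (under concurrency, a real load balancer would also need a lock or an atomic counter). `RoundRobinBalancer` and the `app-*` server names are hypothetical.

```python
class RoundRobinBalancer:
    """Hands out servers in a fixed, repeating order, ignoring their current load."""

    def __init__(self, servers):
        self.servers = list(servers)
        self._next = 0  # index of the server that gets the next request

    def next_server(self):
        server = self.servers[self._next]
        # Move to the next position, wrapping back to 0 at the end of the list.
        self._next = (self._next + 1) % len(self.servers)
        return server


lb = RoundRobinBalancer(["app-1", "app-2", "app-3"])
print([lb.next_server() for _ in range(5)])
# ['app-1', 'app-2', 'app-3', 'app-1', 'app-2']
```

Nothing in `next_server` looks at the servers themselves, which is exactly why round robin ignores load and response time.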

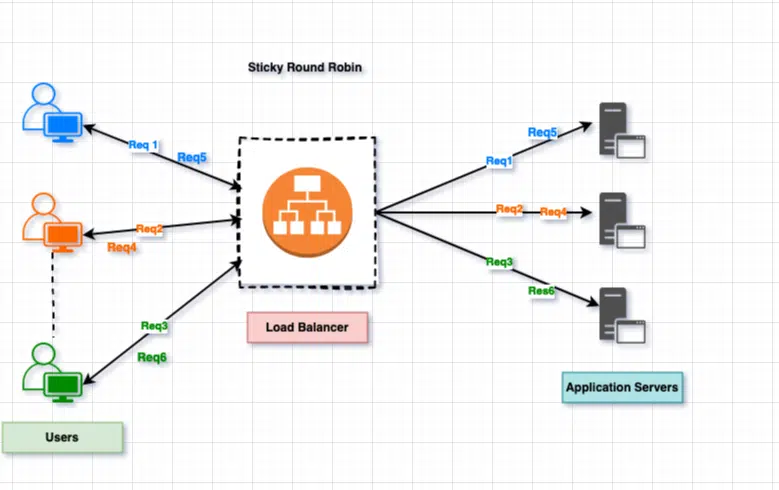

3.1.2 Sticky Round Robin

This is similar to round robin; however, in this case the load balancer keeps track of which server each client is connected to and tries to route every request from that client to the same server. This technique is also known as sticky sessions: the client stays connected to the same server, and all of its requests are processed by that server.
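Sticky round robin can be sketched by remembering each client’s first assignment. This is an illustrative sketch only: `client_id` is a hypothetical identifier (in practice it might come from a session cookie), and the in-memory table never expires entries or handles a server being removed, both of which a real implementation would need.

```python
class StickyRoundRobinBalancer:
    """Round robin for new clients; returning clients go back to the same server."""

    def __init__(self, servers):
        self.servers = list(servers)
        self._next = 0
        self._assignments = {}  # client_id -> server (hypothetical in-memory table)

    def server_for(self, client_id):
        if client_id not in self._assignments:
            # First request from this client: assign the next server in round robin order.
            self._assignments[client_id] = self.servers[self._next]
            self._next = (self._next + 1) % len(self.servers)
        return self._assignments[client_id]


lb = StickyRoundRobinBalancer(["app-1", "app-2"])
print([lb.server_for(c) for c in ["alice", "bob", "alice", "carol", "bob"]])
# ['app-1', 'app-2', 'app-1', 'app-1', 'app-2']
```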

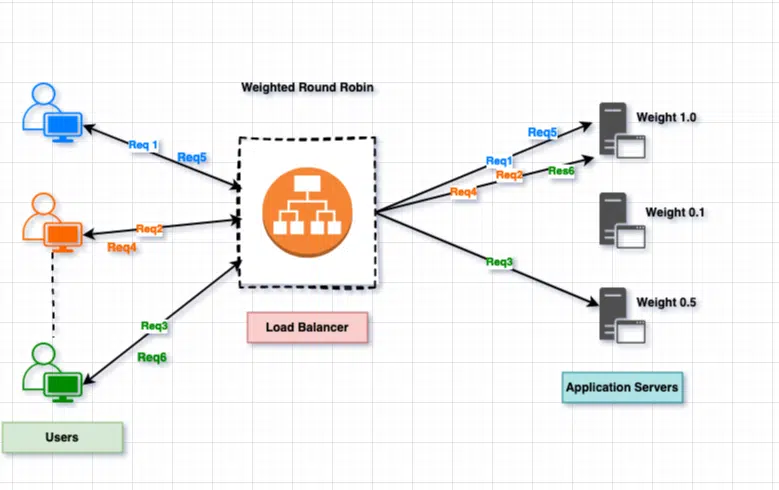

3.1.3 Weighted Round Robin

This algorithm is based on the idea that servers have different load capacities. Based on its load capacity, each server is assigned a weight (an integer value), and the load balancer routes incoming requests according to these weights. Servers with higher weights receive new connections before those with lower weights, and they also receive more connections overall. It’s a step toward dynamic round robin, where we consider multiple factors.
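One way to implement weighted round robin is the "smooth" variant that nginx uses for weighted upstream servers, which interleaves picks instead of sending a burst of consecutive requests to the heaviest server. The sketch below assumes static integer weights; `SmoothWeightedRoundRobin` and the server names are hypothetical.

```python
class SmoothWeightedRoundRobin:
    def __init__(self, weights):
        self.weights = dict(weights)  # server -> integer weight
        self.current = {s: 0 for s in self.weights}  # running "credit" per server
        self.total = sum(self.weights.values())

    def next_server(self):
        # Every server earns credit proportional to its weight...
        for server, weight in self.weights.items():
            self.current[server] += weight
        # ...then the server with the most credit is picked and pays back the total.
        best = max(self.current, key=self.current.get)
        self.current[best] -= self.total
        return best


lb = SmoothWeightedRoundRobin({"big": 5, "medium": 1, "small": 1})
print([lb.next_server() for _ in range(7)])
# ['big', 'big', 'medium', 'big', 'small', 'big', 'big']
```

Over every cycle of 7 requests (the sum of the weights), the servers receive exactly 5, 1, and 1 requests, and the highest-weight server is picked first.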

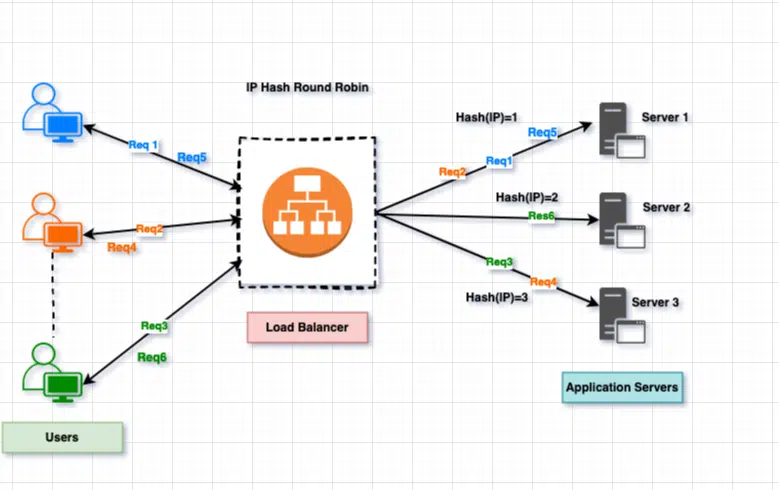

3.1.4 IP Hash Load Balancing

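In this method, the load balancer combines the source and destination IP addresses of the client and server to generate a hash key. This key is used to assign the client to a particular server, so the same client keeps being routed to the same server as long as the set of servers doesn’t change.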
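A sketch of IP hash in Python, assuming the key is simply the two addresses joined into a string and hashed with SHA-256; real load balancers use their own hash functions. `ip_hash_server` is a hypothetical helper, and the addresses come from the reserved documentation ranges.

```python
import hashlib


def ip_hash_server(source_ip: str, dest_ip: str, servers: list[str]) -> str:
    # Combine the source and destination addresses into a single key.
    key = f"{source_ip}->{dest_ip}".encode()
    # Use a stable hash: Python's built-in hash() of a str changes between runs.
    digest = hashlib.sha256(key).digest()
    # Map the hash onto one of the servers.
    index = int.from_bytes(digest[:8], "big") % len(servers)
    return servers[index]


servers = ["app-1", "app-2", "app-3"]
chosen = ip_hash_server("203.0.113.7", "198.51.100.10", servers)
# The same client/destination pair is always sent to the same server.
print(chosen == ip_hash_server("203.0.113.7", "198.51.100.10", servers))  # True
```

Because the index is `hash % len(servers)`, adding or removing a server remaps most clients to different servers; consistent hashing is the usual technique for limiting that reshuffling.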

3.2 Dynamic Load Balancer Algorithms

As the name suggests, dynamic load balancing algorithms take several factors about the servers’ current state into account before selecting a server to serve a request. Here are some parameters that play a role in dynamic load balancing:

- Current availability.

- System capacity.

- Workload on the server

- Server health

One of the main advantages of dynamic load balancing is its ability to shift load to a different server when a server is overloaded or not responding as expected. This gives the overloaded server a chance to recover and improves the overall performance of the system. Compared to static load balancing, dynamic load balancing is less straightforward and needs a more complex setup or configuration. Let’s look at some well-known dynamic load balancing algorithms.

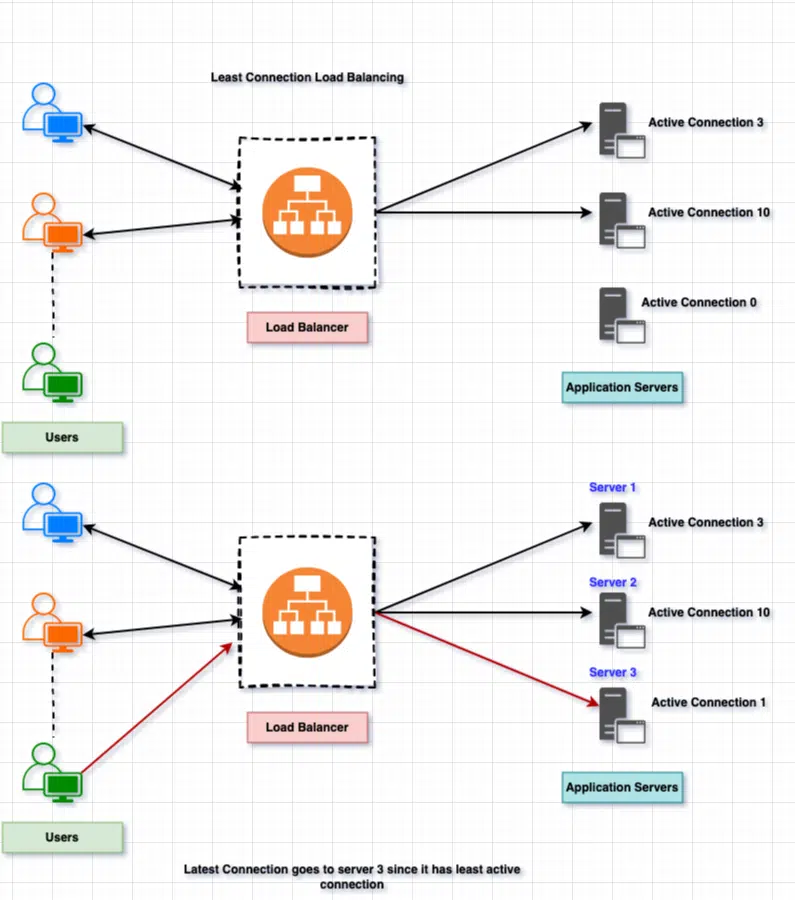

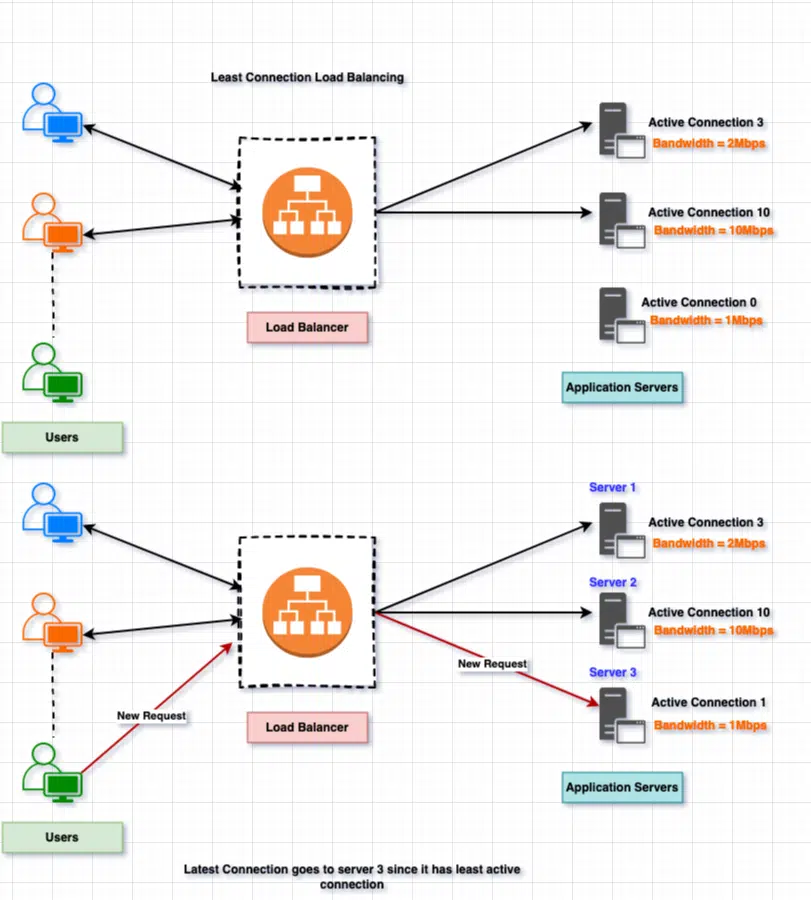

3.2.1 Least Connection Balancing

This algorithm takes into account the number of connections each server is handling and forwards the request to the server with the fewest active connections. This approach is useful when there are many persistent client connections that are unevenly distributed across the servers.

Let’s walk through an example:

- Initially, Server 1 is handling 3 active connections, Server 2 is handling 10, and Server 3 is not handling any.

- When a new request comes in, the load balancer sends it to Server 3, since it has the fewest connections.

- If two more requests come in, they are also sent to Server 3, as it still has the fewest connections.

- When the next request arrives, the load balancer has to choose between Server 1 and Server 3, since both are now serving 3 active connections. In this case, the load balancer can look at other parameters, such as server weight or response time, to decide which server should handle the request.
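The walkthrough above can be replayed with a small Python sketch. Assumptions: connection counts are tracked in memory, and ties are broken simply by the order in which servers were listed (whereas, as noted above, a real load balancer might use weights or response time). `LeastConnectionsBalancer` is a hypothetical name.

```python
class LeastConnectionsBalancer:
    def __init__(self, active_connections):
        self.active = dict(active_connections)  # server -> number of active connections

    def acquire(self):
        # Pick the server with the fewest active connections.
        # On a tie, min() returns the first such server in insertion order.
        server = min(self.active, key=self.active.get)
        self.active[server] += 1
        return server

    def release(self, server):
        # Call when a connection to `server` closes.
        self.active[server] -= 1


# The example above: Server 1 has 3 connections, Server 2 has 10, Server 3 has 0.
lb = LeastConnectionsBalancer({"Server 1": 3, "Server 2": 10, "Server 3": 0})
print([lb.acquire() for _ in range(3)])  # ['Server 3', 'Server 3', 'Server 3']
print(lb.acquire())  # Server 1 (tie at 3 connections, broken by list order here)
```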

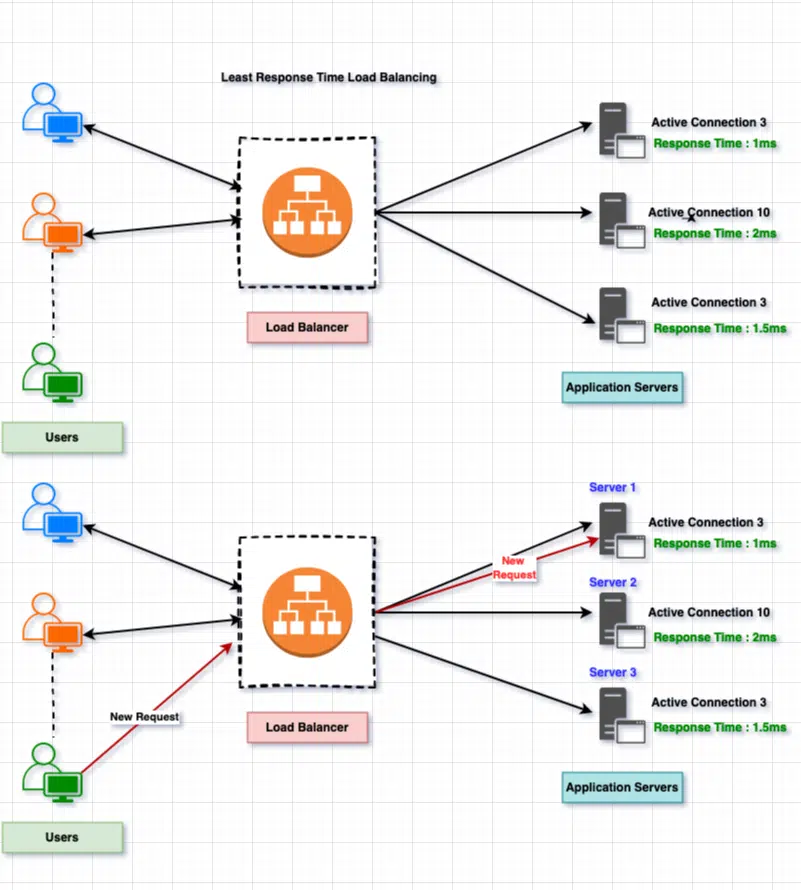

3.2.2 Least Response Time Balancing

When the load balancer is configured to use the least response time algorithm, it selects the server with the fewest active connections and the lowest average response time. It still checks the active connections to make sure a server is not being overloaded before sending the request to the server with the lowest response time.
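A sketch of how the two checks described above might be combined, under stated assumptions: servers at a hypothetical `max_active` connection cap are skipped, the remaining server with the lowest average response time wins (fewer active connections breaks ties), and the average is an exponentially weighted moving average with a hypothetical smoothing factor `alpha`. Real products define "response time" and how it combines with connection counts in their own ways.

```python
from dataclasses import dataclass


@dataclass
class ServerStats:
    active: int = 0      # open connections
    avg_ms: float = 0.0  # moving average of recent response times


class LeastResponseTimeBalancer:
    def __init__(self, stats, max_active, alpha=0.2):
        self.stats = stats            # server name -> ServerStats
        self.max_active = max_active  # hypothetical per-server connection cap
        self.alpha = alpha            # weight of the newest sample in the average

    def acquire(self):
        # First make sure we don't overload anyone: skip servers at their cap.
        candidates = [n for n, s in self.stats.items() if s.active < self.max_active]
        if not candidates:
            raise RuntimeError("all servers are at their connection limit")
        # Lowest average response time wins; fewer active connections breaks ties.
        best = min(candidates, key=lambda n: (self.stats[n].avg_ms, self.stats[n].active))
        self.stats[best].active += 1
        return best

    def release(self, server, elapsed_ms):
        # Connection finished: update the count and fold the timing into the average.
        s = self.stats[server]
        s.active -= 1
        s.avg_ms = (1 - self.alpha) * s.avg_ms + self.alpha * elapsed_ms


lb = LeastResponseTimeBalancer(
    {"app-1": ServerStats(active=2, avg_ms=120.0),
     "app-2": ServerStats(active=5, avg_ms=40.0),  # fastest, but at the cap
     "app-3": ServerStats(active=1, avg_ms=80.0)},
    max_active=5,
)
print(lb.acquire())  # app-3
```

A server with no recorded responses starts at an average of 0 ms and would be preferred until it has real measurements, so a production setup would want some warm-up rule.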

3.2.3 Least Bandwidth Balancing

As the name suggests, the load balancer selects the server that is currently serving the least amount of traffic, measured in bandwidth (for example, megabits per second).
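Least bandwidth needs a running measurement of each server’s throughput. The sketch below assumes the load balancer records the bytes it sends to each server and computes the average rate over a sliding time window; the 10-second window, the injectable `clock`, and `LeastBandwidthBalancer` itself are hypothetical choices for illustration.

```python
import time
from collections import deque


class LeastBandwidthBalancer:
    def __init__(self, servers, window_s=10.0, clock=time.monotonic):
        self.window_s = window_s
        self.clock = clock
        # server -> deque of (timestamp, bytes sent) samples
        self.samples = {s: deque() for s in servers}

    def record(self, server, nbytes):
        # Call whenever traffic is forwarded to `server`.
        self.samples[server].append((self.clock(), nbytes))

    def bytes_per_second(self, server):
        # Drop samples older than the window, then average what remains.
        cutoff = self.clock() - self.window_s
        q = self.samples[server]
        while q and q[0][0] < cutoff:
            q.popleft()
        return sum(n for _, n in q) / self.window_s

    def pick(self):
        # The server currently serving the least traffic wins.
        return min(self.samples, key=self.bytes_per_second)


lb = LeastBandwidthBalancer(["app-1", "app-2"])
lb.record("app-1", 5_000_000)
lb.record("app-2", 1_000_000)
print(lb.pick())  # app-2
```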

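Here is a summary of the common load balancing algorithms covered above:

- Static (ignore the current state of the servers): round robin, sticky round robin, weighted round robin, and IP hash.

- Dynamic (use the current state of the servers): least connections, least response time, and least bandwidth.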

4. Load Balancer Types

Load balancers are used at different levels of the stack. Let’s look at some popular categories of load balancers:

4.1. Network Load Balancer / Layer 4 (L4) Load Balancer

These load balancers work at Layer 4 of the OSI model, the transport layer (TCP/UDP). They look at IP addresses, ports, and other network-level information to decide where to route an incoming request. Since they operate at L4, they do not take into account:

- Request Content Type

- Cookie Information

- Headers

4.2. Application Load Balancer / Layer 7 (L7) Load Balancer

These load balancers work at the top of the OSI model, at Layer 7 (the application layer), and deal with protocols such as HTTP and HTTPS. They distribute traffic based on the following application-level factors:

- HTTP Headers

- SSL information

- Session IDs

Because they can inspect the content of each request, these load balancers can make routing decisions based on the request itself as well as the behavior and configuration of each server, which provides better control and flexibility.
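To illustrate the kind of decision only a Layer 7 load balancer can make, here is a sketch that picks a backend pool from the request path and an HTTP header. The pool names, the path prefixes, and the `X-Beta-User` header are all hypothetical; real L7 load balancers express such rules in their own configuration formats.

```python
from dataclasses import dataclass, field


@dataclass
class Request:
    path: str
    headers: dict[str, str] = field(default_factory=dict)


# Hypothetical path rules, checked in order: (path prefix, backend pool).
PATH_RULES = [
    ("/api/", "api-pool"),
    ("/static/", "static-pool"),
]


def route(request: Request) -> str:
    # HTTP header names are case-insensitive, so normalize them first.
    headers = {name.lower(): value for name, value in request.headers.items()}
    # Header-based rule: opted-in beta users go to a separate pool.
    if headers.get("x-beta-user") == "true":
        return "beta-pool"
    # Path-based rules.
    for prefix, pool in PATH_RULES:
        if request.path.startswith(prefix):
            return pool
    return "web-pool"  # default pool


print(route(Request("/api/orders")))                           # api-pool
print(route(Request("/api/orders", {"X-Beta-User": "true"})))  # beta-pool
```

An L4 load balancer could not implement `route`, because the path and headers only exist once the HTTP request has been parsed.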

4.3. Global Load Balancers

Global load balancers are increasingly relevant today, as many distributed applications span data centers in multiple geographic locations. A global load balancer tries to route traffic to a data center close to the client, while local load balancers manage the application load within each region or zone. It works as a two-step process:

- The global load balancer routes the traffic to the closest data center based on the client’s location.

- Once the request reaches a specific data center, the local load balancer decides which server should serve it.
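The two-step process can be sketched as follows: choose the region whose data center is geographically closest to the client (great-circle distance via the haversine formula), then let that region’s local balancer pick a server, here with plain round robin. This assumes the client’s approximate coordinates are already known (for example, from IP geolocation); real global load balancers often rely on DNS or anycast and on measured latency rather than raw distance. Region names, coordinates, and server names are hypothetical.

```python
import math
from itertools import cycle

EARTH_RADIUS_KM = 6371.0


def haversine_km(a, b):
    # Great-circle distance between two (latitude, longitude) points in degrees.
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(min(1.0, h)))


class GlobalLoadBalancer:
    def __init__(self, regions):
        # regions: name -> ((lat, lon) of the data center, list of servers)
        self.locations = {name: loc for name, (loc, _) in regions.items()}
        # Step 2 helpers: one round robin "local balancer" per region.
        self.local = {name: cycle(servers) for name, (_, servers) in regions.items()}

    def route(self, client_location):
        # Step 1: pick the closest data center.
        region = min(self.locations,
                     key=lambda r: haversine_km(client_location, self.locations[r]))
        # Step 2: the local balancer in that region picks a server.
        return region, next(self.local[region])


glb = GlobalLoadBalancer({
    "us-east": ((39.0, -77.5), ["use-1", "use-2"]),
    "eu-west": ((53.3, -6.3), ["euw-1", "euw-2"]),
})
print(glb.route((51.5, -0.1)))   # ('eu-west', 'euw-1')  client near London
print(glb.route((40.7, -74.0)))  # ('us-east', 'use-1')  client near New York
```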

4.4. Software Load Balancers

As the name suggests, these are software applications running on a server that take different parameters into account to route each incoming request. One of their main benefits is cost: they are cost-effective, and there are many open-source software load balancers, such as HAProxy, that can be used without paying for the software. Their main advantages are:

- Cost Effective.

- Flexible.

- Easy to scale up and down.

4.5. Hardware Load Balancers

These are dedicated physical appliances that act as load balancers and distribute traffic across the backend servers. Since they are purpose-built hardware, they can handle a larger volume of traffic than software-based load balancers, but they have a few drawbacks:

- They are super expensive.

- Limited flexibility.

- Ongoing maintenance

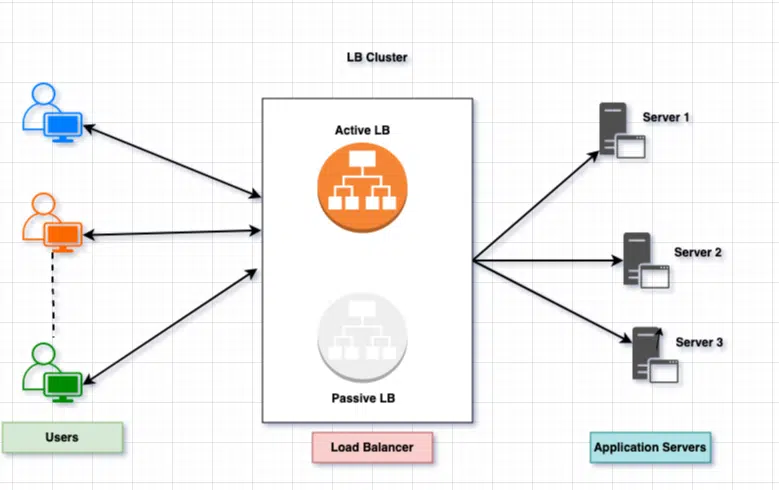

5. Redundant Load Balancers

In system design interviews, we may be asked about the load balancer itself being a single point of failure: if the load balancer goes offline, the entire application goes down. How do we overcome this risk? We can use the same technique we use for databases and other storage solutions, which is redundancy. A second load balancer can be connected to the first to form a cluster, so that if the active load balancer fails, the other one takes over.
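The failover idea can be sketched as an active/standby pair that watches heartbeats. This is an illustrative model only, with hypothetical names and a hypothetical timeout; in practice this is often handled by tools such as keepalived, which use VRRP to move a shared virtual IP address to the standby when the active node stops responding.

```python
import time


class FailoverPair:
    """Active/standby pair of load balancers tracked by heartbeats (illustrative)."""

    def __init__(self, primary, standby, timeout_s=3.0, clock=time.monotonic):
        self.active, self.standby = primary, standby
        self.timeout_s = timeout_s  # hypothetical heartbeat timeout
        self.clock = clock
        now = clock()
        self.last_heartbeat = {primary: now, standby: now}

    def heartbeat(self, node):
        # Each load balancer calls this periodically while it is healthy.
        self.last_heartbeat[node] = self.clock()

    def is_alive(self, node):
        return self.clock() - self.last_heartbeat[node] <= self.timeout_s

    def current_active(self):
        # Promote the standby only if the active node went silent and the standby is alive.
        if not self.is_alive(self.active) and self.is_alive(self.standby):
            self.active, self.standby = self.standby, self.active
        return self.active
```

Usage: traffic is always sent to `current_active()`; if `lb-1` stops sending heartbeats for longer than the timeout while `lb-2` keeps sending them, the next call returns `lb-2`.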

6. Reverse Proxy Server

While discussing load balancing, I think we should also cover reverse proxy servers. A reverse proxy server sits between client requests and the backend servers. As the name suggests, it forwards each request to a backend server and then sends the response back to the client. Reverse proxy servers have many benefits:

- Reverse proxy servers can also act as load balancers.

- They hide the backend servers’ IP addresses from external systems, which adds a layer of security.

- They can also act as caching servers for web content such as CSS and JavaScript files.

- Reverse proxies allow you to access and serve content from multiple servers without exposing each resource directly on the internet.
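The reverse proxy behaviors listed above (forwarding, load balancing, and caching static content) can be modeled in a few lines. This is an in-process sketch, not a network server: each backend is a hypothetical stand-in function from a path to a response body, backends are chosen round robin, and only paths ending in `.css` or `.js` are cached, with no expiry.

```python
from itertools import cycle
from typing import Callable

Backend = Callable[[str], str]  # stand-in for a backend server: path -> response body


class ReverseProxy:
    CACHEABLE_SUFFIXES = (".css", ".js")

    def __init__(self, backends: list[Backend]):
        # Clients only ever talk to the proxy; backend addresses stay hidden.
        self._backends = cycle(backends)
        self._cache: dict[str, str] = {}

    def handle(self, path: str) -> str:
        # Serve static content from the cache when we already have it.
        if path in self._cache:
            return self._cache[path]
        # Otherwise forward to the next backend (round robin) and relay its response.
        body = next(self._backends)(path)
        if path.endswith(self.CACHEABLE_SUFFIXES):
            self._cache[path] = body
        return body


proxy = ReverseProxy([lambda p: f"app-1 served {p}", lambda p: f"app-2 served {p}"])
print(proxy.handle("/site.css"))  # app-1 served /site.css
print(proxy.handle("/site.css"))  # app-1 served /site.css (from the cache)
print(proxy.handle("/orders"))    # app-2 served /orders
```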

Summary

In this post, we covered load balancers. You might not get this question directly in your system design interviews, but it is a very important concept when we work on application scalability. We talked about what a load balancer is and why we need one for our application. We also covered how load balancers work, the different types of load balancers, and the benefits of using them in our application. Feel free to add your feedback in the comment section. As always, you can explore our GitHub repository to get the latest code and run it locally.